94% of business buyers now use AI in their buying process, up from 89% the prior year, with twice as many naming generative AI or conversational search as the most meaningful information source across the buying journey (Forrester, 2025). AEO and GEO share a foundation but diverge on scope, measurement, and ROI timeline, and teams that treat them as interchangeable are misallocating budget on at least one surface.

This guide defines both disciplines, maps where they overlap and where they split, and provides a decision framework for which to prioritize based on your business type and buyer behavior.

AEO Targets Snippet Extraction, Not AI Citation

Answer Engine Optimization (AEO) is the practice of structuring content so search engines extract and display it as a direct answer. The primary targets are Google's featured snippets, People Also Ask boxes, knowledge panels, and voice assistant responses from Siri, Alexa, and Google Assistant.

AEO predates the AI search era. It emerged when Google began displaying "position zero" results that answered user questions directly within the search results page, without requiring a click. The optimization tactics are familiar to anyone who has done SEO: clear Q&A formatting, FAQ schema markup, concise 40 to 60 word answer blocks, and structured data that makes extraction easy.

AEO rewards brevity and precision. A 50-word paragraph that directly answers "how much does CRM software cost" wins the featured snippet. A 2,000-word guide exploring pricing philosophy does not.
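The two tactics above, a 40 to 60 word answer block plus FAQ schema, can be sketched together in a few lines of Python. This is a minimal illustration, not a prescribed implementation: `build_faq_jsonld` and its parameters are hypothetical names, and the only external convention it relies on is the schema.org `FAQPage` / `Question` / `Answer` vocabulary.

```python
import json


def build_faq_jsonld(qa_pairs, min_words=40, max_words=60):
    """Build FAQPage JSON-LD and flag answers outside the target length.

    qa_pairs: list of (question, answer) strings.
    Returns (json_ld_string, warnings).
    """
    entities, warnings = [], []
    for question, answer in qa_pairs:
        n = len(answer.split())  # whitespace-separated word count
        if not min_words <= n <= max_words:
            warnings.append(
                f"{question!r}: answer is {n} words, "
                f"outside {min_words}-{max_words}"
            )
        entities.append({
            "@type": "Question",
            "name": question,
            "acceptedAnswer": {"@type": "Answer", "text": answer},
        })
    doc = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": entities,
    }
    return json.dumps(doc, indent=2), warnings
```

The returned string would go inside a `<script type="application/ld+json">` tag on the page, and the warnings list gives an editor a quick signal when an answer block has drifted too long or too short for snippet extraction.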

GEO Rewards Depth With a 41% Visibility Gain From Statistics

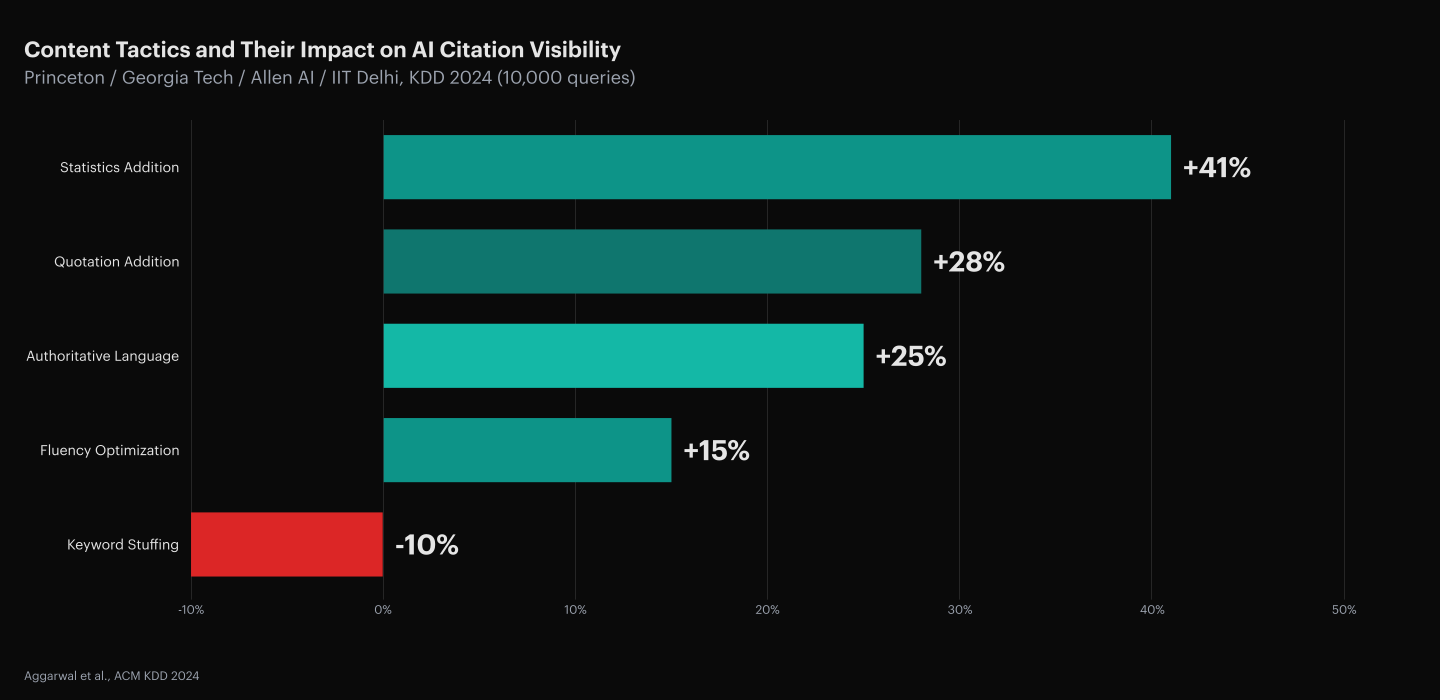

Generative Engine Optimization (GEO) is the practice of structuring content and brand presence so AI-powered platforms cite, recommend, or mention your brand when generating answers. Adding attributed statistics to content improved AI citation visibility by 41%, while keyword stuffing decreased it by 3% (Princeton KDD, 2024).

GEO was formalized as a concept in a 2024 peer-reviewed paper by researchers at Princeton University, IIT Delhi, Georgia Tech, and the Allen Institute for AI, published at the ACM SIGKDD Conference (Aggarwal et al., KDD 2024). The study tested specific content tactics and measured their impact on AI citation likelihood: adding statistics improved visibility by 41%, adding quotations by 28%, and using authoritative language by 25%.

GEO rewards depth, authority, and freshness. A 1,500-word article with comparison tables, attributed statistics, and self-contained sections that each answer a specific buyer question earns AI citations. A 50-word answer block, while perfect for a featured snippet, rarely provides enough substance for an AI engine to cite. The targets are ChatGPT, Perplexity, Google AI Overviews, Claude, and Gemini.

AEO and GEO Share 5 Structural Principles

Both disciplines share the same foundation: content that is structured, authoritative, and directly answers buyer questions. AI-cited content is 25.7% fresher than traditionally ranked organic results (Ahrefs, 2025), a signal that applies to both snippet eligibility and AI citation probability.

| Shared Principle | How AEO Applies It | How GEO Applies It |
|---|---|---|
| Answer-first structure | First sentence directly answers the heading's question | First 1 to 2 sentences provide an extractable answer capsule |
| Clear entity identification | Schema markup defines what the content is about | Named entities (products, companies, people) help AI resolve identity |
| Source credibility | E-E-A-T signals improve snippet eligibility | Named source attribution improves AI citation probability |
| Structured formatting | Lists, tables, and FAQs improve snippet extraction | Tables and self-contained sections improve passage retrieval |
| Content freshness | Updated content wins recency-sensitive snippets | Fresher content earns disproportionate AI citation share |

Because the overlap is so large, Profound's position is that AEO and GEO are "essentially the same strategy." That is a defensible view if your only goal is to produce structured, authoritative content. But it collapses a meaningful strategic distinction that matters for resource allocation.

9 Dimensions Where AEO and GEO Split

The differences emerge in scope, platform, content format, authority signals, and measurement. Only 11% of domains overlap between ChatGPT and Perplexity citation pools (Averi, 2026), which means optimizing for one AI surface does not guarantee coverage on another.

| Dimension | AEO | GEO |
|---|---|---|
| Primary target | Google featured snippets, People Also Ask, voice assistants | ChatGPT, Perplexity, Google AI Overviews, Claude, Gemini |
| Content format | Short answer blocks (40 to 60 words), FAQ pages, concise definitions | In-depth articles with comparison tables, attributed stats, self-contained H2 sections |
| Schema dependency | High; FAQ schema, HowTo schema, and speakable markup directly influence extraction | Low; AI engines extract from visible HTML text, not JSON-LD; schema helps with entity resolution but does not drive citations |
| Authority signal | Backlinks, domain authority, E-E-A-T | Brand mentions across the web (Reddit, G2, LinkedIn, YouTube); non-giant domains hold #1 on 93 of 100 B2B queries (Res AI, 1,000-query study, 2026) |
| Competitive dynamics | Deterministic; one featured snippet per query; you win it or you do not | Probabilistic; less than a 1-in-100 chance of getting the same result twice (SparkToro, 2024) |
| Measurement | Google Search Console tracks featured snippet impressions and clicks | No native analytics; requires dedicated AI citation monitoring across platforms |
| Platform overlap | Google-centric (Bing has snippets, but Google dominates) | Multi-platform; only 11% domain overlap between ChatGPT and Perplexity (Averi, 2026) |
| Content length | Shorter is better; snippets favor concise extraction | Longer is better; top-quartile articles average 4.5x more structural elements than bottom-quartile (Res AI, 852-article B2B study, 2026) |
| Update cadence | Moderate; snippets are relatively stable | High; 40 to 60% of cited domains change month-to-month (Profound, 2026) |

The last row is the most strategically significant. AEO positions are relatively durable. Once you win a featured snippet, you tend to hold it until a competitor publishes better content. GEO positions are volatile. Maintaining AI citations requires continuous content refresh, monitoring, and execution.
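Two figures in the table, platform overlap and month-to-month churn, reduce to set operations on lists of cited domains. The sketch below shows one way to compute them from your own monitoring exports. The definitions are assumptions: the cited studies do not state their exact formulas here, so overlap is taken as shared domains over all domains seen (a Jaccard index) and churn as the share of last month's domains that are missing this month. The function names are hypothetical.

```python
from urllib.parse import urlparse


def domain_of(url: str) -> str:
    """Return the lowercase hostname of a URL, without a leading 'www.'.

    URLs must include a scheme (https://...); otherwise urlparse
    leaves netloc empty.
    """
    host = urlparse(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def domain_overlap(urls_a, urls_b) -> float:
    """Shared domains as a fraction of all domains seen (Jaccard index)."""
    a = {domain_of(u) for u in urls_a}
    b = {domain_of(u) for u in urls_b}
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def monthly_churn(previous_domains, current_domains) -> float:
    """Fraction of last month's cited domains that are absent this month."""
    previous, current = set(previous_domains), set(current_domains)
    return len(previous - current) / len(previous) if previous else 0.0
```

Feeding `domain_overlap` the URLs cited by two engines for the same prompt set, and `monthly_churn` two consecutive monthly snapshots from one engine, gives a rough local read on both dimensions. If your monitoring tool defines overlap or churn differently, swap in its definition before comparing against published benchmarks.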

AEO First When Buyers Ask Simple Questions on Google

AEO is the right primary investment when your buyer journey starts with a simple, direct question and your business depends on Google traffic. AEO can produce results in weeks because featured snippets are relatively deterministic and Google Search Console surfaces the data immediately.

| Business Type | Why AEO First |
|---|---|
| Local services (lawyers, dentists, plumbers) | Buyers ask "how much does a root canal cost" or "best plumber near me"; featured snippets and local knowledge panels drive calls |
| E-commerce with simple products | "What's the best running shoe for flat feet" has a featured snippet answer; winning it drives product page traffic |
| B2C with high search volume | Millions of consumers still start on Google; featured snippets capture demand at the top of the funnel |
| Content publishers | FAQ boxes and People Also Ask generate significant impression volume; AEO extends SERP real estate |

Adding FAQ schema, writing answer blocks, and restructuring existing content for snippet extraction can produce results within weeks. The measurement infrastructure already exists in Google Search Console.

GEO First When AI Shortlists Drive 80% of B2B Deals

GEO is the right primary investment when your buyers use AI to research, compare, and shortlist. 94% of business buyers use AI in their buying process (Forrester, 2025), and the top-ranked vendor on the shortlist wins 80% of deals (6sense, 2025).

| Business Type | Why GEO First |
|---|---|
| B2B SaaS | Enterprise buyers ask ChatGPT "what's the best [category] for [use case]" before they visit any website; being on the AI shortlist is the new being on page one |
| High-consideration purchases | Products with long sales cycles (months, not minutes) get researched through AI; buyers ask follow-up questions; each question is a citation opportunity |
| Competitive categories | If your competitors are already being cited and you are not, every AI-generated answer is a lost impression; the gap compounds daily |
| Companies with original data | If you have proprietary benchmarks, survey data, or case studies with metrics, AI engines want to cite you; you have a structural advantage over competitors who only summarize third-party research |

GEO takes longer to produce results. Building comparison tables, publishing stat-backed articles, earning brand mentions on Reddit and G2, and maintaining a monitoring program is a quarterly commitment, not a one-week project. But the payoff compounds: AI-referred traffic achieves a 14.2% conversion rate versus 2.8% for Google organic, a 5.1x advantage (Exposure Ninja, 2026).
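The 5.1x figure follows directly from the two conversion rates quoted above. A short check, using only those two numbers (the helper name `relative_lift` is illustrative):

```python
def relative_lift(rate_a: float, rate_b: float) -> float:
    """How many times larger rate_a is than rate_b."""
    if rate_b <= 0:
        raise ValueError("rate_b must be positive")
    return rate_a / rate_b


# Conversion rates in percent, as quoted (Exposure Ninja, 2026)
ai_rate, organic_rate = 14.2, 2.8

print(f"{relative_lift(ai_rate, organic_rate):.1f}x")  # prints 5.1x

# Expected conversions per 1,000 visits at each rate
print(round(1000 * ai_rate / 100), round(1000 * organic_rate / 100))  # prints 142 28
```

Put differently, at these rates 1,000 AI-referred visits would yield roughly as many conversions as 5,000 Google organic visits, which is why a smaller traffic source can still justify the longer GEO timeline.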

Layer Both on Every Article for 2 Retrieval Surfaces

AEO and GEO are not competing strategies. They operate on different surfaces of the same buyer journey. As Power Digital's Associate Director of SEO Strategy Alyssa Smith put it: "Where AEO is about formatting answers, GEO is about earning them."

| Buyer Journey Stage | AEO Role | GEO Role |
|---|---|---|
| Awareness ("What is [category]?") | Win the featured snippet with a 50-word definition | Be cited in ChatGPT and Perplexity's synthesized explanations |
| Consideration ("Best [category] tools for [use case]") | Win People Also Ask boxes for specific feature questions | Be included in AI-generated comparison lists and shortlists |
| Decision ("[Your product] vs [Competitor]") | Own the featured snippet for head-to-head comparison queries | Be cited as a source in AI-generated comparison responses |
| Post-purchase ("How do I set up [product]?") | Win snippet for implementation and support questions | Be cited in AI help responses that guide new users |

The layered approach: write each piece of content with both targets in mind. The 50-word answer capsule at the top of each H2 serves AEO. The full section with comparison tables, attributed stats, and self-contained structure serves GEO. One piece of content, two optimization surfaces.
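A rough way to check whether a draft section serves both surfaces is to test for the capsule and the depth signals separately. The sketch below is a heuristic under stated assumptions, not a scoring model used by any search or AI engine: it assumes sections arrive as plain text with blank lines between paragraphs and pipe-delimited table rows, treats 60 words as the capsule ceiling, and uses a percentage figure as a stand-in for "attributed stat". `audit_section` is a hypothetical helper.

```python
import re


def audit_section(body: str, max_capsule_words: int = 60) -> dict:
    """Check one H2 section for an AEO capsule and basic GEO depth signals."""
    paragraphs = [p.strip() for p in body.split("\n\n") if p.strip()]
    capsule_words = len(paragraphs[0].split()) if paragraphs else 0
    return {
        # AEO layer: a short opening paragraph that can be lifted as a snippet
        "capsule_ok": 0 < capsule_words <= max_capsule_words,
        # GEO layer: at least one table row (a line starting with a pipe)
        "has_table": any(
            line.lstrip().startswith("|") for line in body.splitlines()
        ),
        # GEO layer: at least one percentage figure, e.g. "41%" or "14.2%"
        "has_stat": bool(re.search(r"\d+(\.\d+)?%", body)),
    }
```

A section that passes `capsule_ok` but fails both depth checks is optimized for snippets only; one that has tables and stats but opens with a long paragraph is leaving the snippet surface uncovered.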

Execution Beats Terminology Every Time

Whether you call it AEO, GEO, LLMO, or AI search optimization, the underlying work is the same: create content that AI engines can find, extract, trust, and cite. 40 to 60% of domains cited in AI responses change month-to-month, with drift reaching 70 to 90% over six months (Profound, 2026), which means a monitoring-only approach decays faster than the content it tracks.

Most marketing teams understand the concepts. Far fewer have the infrastructure to act on them. They know they should monitor AI citations, write structured content, and maintain freshness. They lack the tools, the pipeline, or the team to do it continuously.

The real question is not "AEO or GEO?" It is "do I have a system that monitors where I am missing, creates the content that fills the gap, and publishes it before my competitor does?" If the answer is no, the terminology is irrelevant.

Frequently Asked Questions

Why does the AEO/GEO terminology split cause real budget misallocation?

Teams that conflate AEO with GEO tend to stop at schema markup and snippet-length answer blocks, which leaves the deeper structural work (tables, attributed stats, multi-section articles) unfunded. The Princeton KDD study (2024) found adding statistics improved AI visibility by 41%, a tactic that snippet optimization alone never requires. Treating the terms as identical underweights the GEO investment.

How does schema markup affect AI citation differently than snippet extraction?

FAQ and HowTo schema directly influence whether Google surfaces a featured snippet, but AI engines like ChatGPT and Perplexity extract from visible HTML text, not JSON-LD. Schema helps AI resolve entity identity (confirming what a page is about), yet it does not drive citation selection. For GEO, a visible FAQ with real H3 headings matters more than any schema annotation the reader never sees.

Why does GEO require a continuous refresh loop while AEO does not?

AEO positions are anchored to deterministic ranking signals that update on Google's crawl cadence, so a winning snippet tends to hold. GEO citations are drawn at response time from a retrieval pool that shifts with model updates, index refreshes, and freshness weighting. Because 40 to 60% of domains cited in AI responses change month-to-month (Profound, 2026), a GEO program without a refresh loop loses ground silently.

How much faster does AEO show measurable results than GEO?

AEO can surface results in weeks because Google Search Console tracks featured snippet impressions immediately and the ranking signal is deterministic. GEO takes 8 to 12 weeks minimum because AI citations are probabilistic, there is no native analytics surface, and the cited-source pool turns over monthly. Plan a longer measurement window for GEO before judging ROI.

Can a brand-new domain earn AI citations before it earns Google clicks?

Yes, on Perplexity at least. Fifteen days after launch with two articles, Perplexity cited tryres.ai at #1 for "domain authority in AI citations" ahead of PRLog, DigitalStrategyForce, DigitalApplied, and Chudi, against 0 Google clicks across 408 impressions in the same window (Res AI, day-15 launch citation proof, 2026). ChatGPT, Claude, and Gemini behavior is unmeasured (Perplexity-to-ChatGPT citation overlap is ~11%), so the result does not predict every engine.

Can a high-authority domain coast on AEO strength and still win AI citations?

Partially, but not automatically. The structural habits that win featured snippets (answer-first paragraphs, clear headings, clean schema) transfer to GEO. What does not transfer is depth: a 50-word snippet block is too thin to be cited by ChatGPT or Perplexity, which reward full-coverage passages with attributed stats and tables. An AEO-strong team still has to add depth.

How does authority differ between AEO and GEO in practice?

AEO leans heavily on backlinks, domain authority, and E-E-A-T. GEO leans on brand mentions across the web (Reddit, G2, LinkedIn, YouTube), not traditional link-based authority signals. Non-giant domains hold stable #1 citation position on 93 of 100 B2B AI queries, with giants winning only 4 of 100, all of them review aggregators (Res AI, 1,000-query B2B AI citation structure study, 2026). A brand that wins on traditional authority can still lose on GEO.

What happens to AEO as Google expands AI Overviews?

AEO does not disappear, but its surface shrinks. After Google made Gemini 3 the global default for AI Overviews in January 2026, 42.4% of previously cited domains (37,870 of 89,262) no longer appeared, replaced by 46,182 new domains (SE Ranking, 2026). Teams that win both surfaces on the same content are future-proofed. Teams that optimize only for traditional snippets are shrinking with the surface.

Should AEO and GEO run as separate workflows with separate teams?

No. Separating them is wasted overhead. The efficient pattern is to write one structured article that satisfies both extraction surfaces, then measure each surface independently: Google Search Console for featured snippets, a dedicated citation monitor for AI surfaces. The workflow is the same writing workflow; the difference is dual measurement.

How Res AI Closes the GEO Execution Gap Across 4 AI Engines Daily

The central problem this guide exposes is not terminology confusion; it is the execution gap between knowing you need GEO and actually shipping structured, fresh, citation-ready content continuously. Res AI was built specifically to close that gap. The platform's Strategy Agent monitors the prompts buyers are actively asking across ChatGPT, Perplexity, Google AI Overviews, and Claude, identifies where your content is missing or losing, and surfaces the competitive gaps.

From there, the Citation Agent researches and attaches citable statistics to your existing claims, and the Content Agent restructures dense prose into the tables, FAQs, and self-contained sections that the 852-article B2B citation structure study found in 94% of top-cited pages and 0% of bottom-cited pages. Res connects directly to WordPress, Webflow, Framer, or Contentful and publishes changes without developer involvement.

The result is a continuous loop: monitor, restructure, publish, and re-monitor. No manual content briefs. No quarterly audits that go stale before they ship. Every piece of content stays structured and fresh against a citation landscape where 40 to 60% of sources turn over monthly.

Res AI turns the AEO-plus-GEO layering strategy this guide describes into an autonomous workflow, monitoring your AI citations daily and deploying structured content where you are not being cited. Autonomous agents research fresh claims, convert prose into extractable sections, and publish directly to your CMS.