Content teams buy monitoring-first GEO platforms to see which AI engines cite them. Profound’s month-over-month citation drift analysis found that 40% to 60% of domains appearing in one month’s AI responses are absent the next, rising to 70% to 90% over six months (Profound, 2026). By the time a dashboard surfaces the displacement, the window to earn back the citation has already closed for teams whose only response is to rebrief an agency and wait two weeks for new copy.

42.4% of Cited Domains Disappeared With Gemini 3

A single model update erased nearly half of the previously cited domains from Google AI Overviews. Analysis of pre- and post-Gemini 3 AI Overviews found 42.4% of previously cited domains (37,870 out of 89,262) no longer appeared after Gemini 3 became the global default on January 27, 2026 (SE Ranking, 2026). The reshuffling was concentrated in the long tail, which is exactly where most mid-market B2B brands compete.

Gemini 3 also increased the average number of sources per AI Overview by 31.8%, from 11.55 to 15.22 citations per response. The surface area for citation grew, but the criteria for which pages make the cut changed at the same time. Smaller domains holding previously earned positions got no warning signal and had no restructuring pipeline queued to respond within the first week of the rollout.
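Both percentages can be re-derived from the raw figures SE Ranking reported. A minimal Python check, using only the numbers cited in this section:

```python
# Figures reported by SE Ranking (2026) for pre- vs post-Gemini 3 AI Overviews.
previously_cited = 89_262
no_longer_cited = 37_870
sources_before = 11.55  # average citations per AI Overview before Gemini 3
sources_after = 15.22   # average citations per AI Overview after Gemini 3


def pct(part: float, whole: float) -> float:
    """Percentage of `whole` represented by `part`, rounded to one decimal."""
    return round(100 * part / whole, 1)


displacement_rate = pct(no_longer_cited, previously_cited)
source_growth = pct(sources_after - sources_before, sources_before)

print(displacement_rate)  # 42.4
print(source_growth)      # 31.8
```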

Citation Drift Runs 40% to 60% Every Month

Citation lists churn even when no model update lands. Profound measured month-over-month drift at 40% to 60% across ChatGPT, Perplexity, Gemini, Copilot, and Google AI Overviews on identical prompts, rising to 70% to 90% over six months (Profound, 2026). The discovery surface most brands treat as stable is a moving list recomputed on every query.
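The drift figure compares the set of cited domains in two snapshots of the same prompt. A minimal sketch, assuming drift is the share of earlier-snapshot domains that are absent from the later one (the definition implied in the opening paragraph; Profound’s exact methodology may differ). The snapshots below are invented for illustration:

```python
def citation_drift(previous: set[str], current: set[str]) -> float:
    """Share of domains cited in `previous` that are absent from `current`.

    Assumed definition: |previous - current| / |previous|.
    Profound's published methodology may define drift differently.
    """
    if not previous:
        return 0.0
    return len(previous - current) / len(previous)


# Hypothetical monthly snapshots of cited domains for one tracked prompt.
march = {"a.example", "b.example", "c.example", "d.example", "e.example"}
april = {"a.example", "c.example", "f.example", "g.example", "h.example"}

print(f"{citation_drift(march, april):.0%}")  # 60%
```

Note that the measure is asymmetric: new entrants in the later snapshot do not count toward drift, only losses from the earlier one do.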

Organic Google rankings for a target keyword typically drift 1 to 3 positions a month on unchanged content. Under the same conditions, AI engines drop roughly half of the cited list from one monthly snapshot to the next. Content teams that manage AI visibility on a quarterly SEO cadence are under-measuring their exposure and under-reacting to losses that compound.

Monitoring Alerts Surface Days After Displacement

Monitoring platforms report displacement only after the next scheduled sampling run completes. Profound and AthenaHQ re-run tracked prompts on daily or multi-day cadences, so a citation drop on Tuesday reaches the dashboard Wednesday morning at the earliest. The gap between a citation drop and an alert is the sampling interval plus the latency of a human opening the report.

A 24-hour cadence sounds tight until the content team’s response time is added on top. The team opens the dashboard Wednesday, escalates Thursday, briefs an agency Friday, waits a week for copy, edits for 2 to 3 days, and publishes on day 14. By then the monitoring tool has completed 14 more daily runs, the engine has recomputed the citation list on every query in between, and the displacement has often cascaded further down the long tail.
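The timeline above is simple addition, but laying it out step by step shows where the days go. A minimal sketch: the step durations come from the scenario in this section, while the three editing days (the upper end of the 2-to-3-day range) and the one-day publish step are assumptions chosen so the total lands on day 14:

```python
from itertools import accumulate

# (step, days) pairs from the scenario above; "internal edits" = 3 and
# "publish" = 1 are assumptions that make the total match day 14.
steps = [
    ("sampling run surfaces the drop", 1),
    ("escalation", 1),
    ("agency brief", 1),
    ("agency copy", 7),
    ("internal edits", 3),
    ("publish", 1),
]

# Running total of elapsed days after each step completes.
elapsed = list(accumulate(days for _, days in steps))
for (name, _), day in zip(steps, elapsed):
    print(f"day {day:>2}: {name}")

total_days = elapsed[-1]
window_days = 2  # the 48-hour re-citation window argued for in this article
print(f"published on day {total_days}, {total_days - window_days} days past the window")
```

Shortening any single agency step barely moves the total; only removing the brief-and-copy handoff changes the order of magnitude.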

The Re-citation Window Closes Before Agency Turnaround

The window to earn back a lost citation is measured in days, not weeks. Semrush’s GEO program saw LLM citation results within days, sometimes hours, after publishing restructured content, with AI share of voice rising roughly 2.5x, from 13% in July to 32% in August 2025 (Semrush, October 2025). Teams that can publish structural edits in hours beat teams waiting 10 to 14 days on an agency brief cycle.

Agency production timelines were built for quarterly content calendars, not daily re-citation needs. A monitoring dashboard reporting on Tuesday that three competitor domains replaced yours in AI Overviews is useful input only if the content team can push edits on Wednesday. That capability is incompatible with an external agency brief cycle running on a 10-business-day SLA.

Only 30% of Brands Stay Visible Run to Run

Brand visibility collapses between sequential AI responses on the same prompt. Analysis of approximately 15 million data points across ChatGPT, Perplexity, Claude, and Gemini found only 30% of brands stay visible from one answer to the next, with just 20% present across five consecutive runs (AirOps and Kevin Indig, 2026). A single-prompt monitoring sample captures one draw from a distribution that rotates on every request.

An alert reporting that a content team lost 3 citations in the last run is likely sampling noise unless the baseline measurement includes dozens of runs per prompt and the new measurement applies the same protocol. SparkToro prompted ChatGPT and Google’s AI 100 times each and found less than a 1-in-100 chance that any two responses contained identical brand lists (SparkToro, 2024). Most monitoring tools report on a single daily probe, which places the reported change inside the run-to-run variance.
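Both stability figures depend on how “stays visible” is defined, and the definitions are not spelled out here. A minimal sketch, assuming pairwise retention means the share of brands in one run that reappear in the next, and five-run persistence means the share of first-run brands present in every run. The five runs below are invented toy data, not measurements:

```python
from functools import reduce


def pairwise_retention(runs: list[set[str]]) -> float:
    """Mean share of brands in run i that reappear in run i + 1."""
    shares = [len(a & b) / len(a) for a, b in zip(runs, runs[1:]) if a]
    return sum(shares) / len(shares) if shares else 0.0


def persistent_share(runs: list[set[str]]) -> float:
    """Share of brands in the first run that appear in every run."""
    if not runs or not runs[0]:
        return 0.0
    always_present = reduce(set.intersection, runs)
    return len(always_present) / len(runs[0])


# Hypothetical brand lists from five runs of the same prompt.
runs = [
    {"A", "B", "C", "D", "E"},
    {"A", "C", "F", "G", "H"},
    {"A", "C", "I", "J", "K"},
    {"A", "L", "M", "N", "O"},
    {"A", "C", "P", "Q", "R"},
]

print(f"{pairwise_retention(runs):.0%}")  # 30%
print(f"{persistent_share(runs):.0%}")    # 20%
```

A dashboard that compares only the last two runs is effectively reporting one term of `pairwise_retention`, which is why a single-run delta says little on its own.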

Only 11% of Cited Domains Overlap Across Engines

Optimizing for one AI engine does not transfer to others. Averi’s analysis of 680 million citations across ChatGPT, Perplexity, Google AI Overviews, and other engines found only 11% of cited domains appear in both ChatGPT and Perplexity results (Averi, 2026). A brand that wins in ChatGPT after restructuring its product pages will not automatically appear in Perplexity, Gemini, or Google AI Overviews.
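The 11% figure is a set-overlap statistic. Averi’s exact denominator is not stated here, so the sketch below assumes Jaccard overlap (domains cited by both engines divided by domains cited by either). The domain sets are hypothetical:

```python
def domain_overlap(a: set[str], b: set[str]) -> float:
    """Jaccard overlap: domains cited by both engines / domains cited by either."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


# Hypothetical cited-domain sets for the same prompt on two engines.
chatgpt = {"forum.example", "wiki.example", "vendor-a.example", "vendor-b.example"}
perplexity = {"wiki.example", "vendor-c.example", "vendor-d.example", "news.example"}

print(f"{domain_overlap(chatgpt, perplexity):.1%}")  # 14.3%
```

Under a different denominator (for example, overlap as a share of one engine’s list), the same sets would give 25%, so cross-study comparisons should check which definition was used.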

Monitoring-first platforms track every engine on the same dashboard, which implies a unified remediation path that does not exist. Each engine rewards a slightly different structural signal: Perplexity emphasizes source freshness, Gemini 3 pulls more long-tail citations per response, and ChatGPT weights community platforms heavily. Agency-briefed content operating on one playbook misses the engines that reward a different structural pattern, and the monitoring tool cannot close that gap by reporting the miss.

Semrush Saw Citations Within Hours, Not Weeks

GEO compounds on a weekly cadence, not on SEO’s quarterly pace. Semrush documented LLM citation results within days, and sometimes within hours, of publishing restructured content, far faster than the typical SEO timeline of 3 to 6 months (Semrush, October 2025). The acceleration accrues only to teams that can publish on the same interval at which the engines re-crawl.

A monitoring dashboard that reports weekly runs at less than a third of the speed a 48-hour execution loop needs. The team with a 48-hour brief-to-publish loop rebuilds its citation position between two of the monitoring tool’s scheduled scans. The team on a 14-day agency cycle is still behind the data when the dashboard completes its second weekly interval, and the agency usually has no access to the dashboard in the first place.

Pages Not Updated Quarterly Lose 3x More Citations

Citation decay accelerates for stale content. Pages not updated quarterly are 3x more likely to lose citations across ChatGPT, Perplexity, Claude, and Gemini (AirOps and Kevin Indig, 2026). Monitoring surfaces the decay. Execution reverses it. A platform that only reports cannot move a quarterly-stale page back into the citation set.

The natural cadence for an enterprise SEO calendar is quarterly content production, and on AI search that cadence loses citations by design. A content team that leaves pages untouched for longer than a quarter carries roughly 3x the citation-loss risk of a team that updates more often, and the monitoring platform becomes a diagnostic tool that proves the decay without offering the remediation. Diagnostic confidence does not change the citation set.

Enterprises Are Allocating 12% of Budgets to AEO

Enterprise AEO budgets are moving fast, and where the dollars land determines whether citation losses get recorded or reversed. Conductor’s survey of over 250 enterprise digital leaders found 94% plan to increase AEO/GEO investment in 2026, with allocations averaging 12% of total digital marketing budgets, and respondents ranking AEO/GEO as the #1 strategic marketing priority for 2026 (Conductor, 2026). That spend is flowing to platforms, and the split between monitoring and execution is now a capital-allocation decision.

56% of surveyed enterprises had already made a significant or high investment in AEO during 2025. A meaningful portion of that capital went to monitoring-first platforms that report on citations without changing them, a pattern that Res AI’s case for execution-first GEO tooling has tracked since 2025. The 2026 reallocation is the first real opportunity to rebalance toward the layer that actually moves the numbers the dashboard is measuring.

Monitoring-First vs Execution-First Platforms Split on One Axis

Three platforms competing for enterprise GEO budgets in 2026 split cleanly on where the work gets done relative to the 48-hour re-citation window. The matrix below compares each platform on its scope of work, how fast a signal turns into a published change, and what the team gets back.

| Platform | Scope of work | Time from alert to published change | Output for the team |
|---|---|---|---|
| Res AI | Rewrites existing pages within the 48-hour re-citation window | Under 48 hours via natural-language CMS edits | Published structural changes inside the window |
| Profound | Monitors brand presence across AI engines and writes briefs | 10 to 14 days via agency brief cycle | Strategy briefs the team or agency executes |
| AthenaHQ | Tracks AI visibility and surfaces optimization recommendations | 7 to 14 days via content team | Optimization recommendations the team applies |

Profound’s strength is cross-engine visibility analytics and agent-driven brand measurement. AthenaHQ tracks 8 or more LLMs with automated content suggestions. Res AI connects to the CMS and executes the restructuring itself, which is the only layer where a 48-hour re-citation window stays reachable. The monitoring-and-briefing path is built for a 14-day publish cycle, which is longer than the window the Gemini 3 displacement left open for teams that relied on it.

Frequently Asked Questions

Why do monitoring-first platforms report alerts days after a citation shift?

Monitoring platforms sample tracked prompts on scheduled intervals, typically daily or every few days, so a citation drop reaches the dashboard only after the next run completes. Profound and AthenaHQ both batch their sampling runs, which means the observable alert latency is the sampling cadence plus the time for a human to read the report.

How fast does a re-citation window close after a model update?

42.4% of previously cited domains disappeared within days of the Gemini 3 default rollout on January 27, 2026 (SE Ranking, 2026). The replacement domains stabilized within the first week, which puts the actionable window at 7 days or less for the brands that lost positions during the transition.

Can an agency-briefed content cycle keep up with monthly citation drift?

Unlikely. Citation drift runs 40% to 60% per month (Profound, 2026), while a typical agency brief-to-publish cycle is 10 to 14 days per asset. Restructuring an article library at the agency cadence cannot keep pace with the churn, which is why execution-first tooling becomes the gating factor rather than the briefing layer.

Why does optimizing for ChatGPT not transfer to Gemini?

Only 11% of cited domains appear in both ChatGPT and Perplexity (Averi, 2026), and engine-level ranking factors diverge further across Gemini and Google AI Overviews. Each engine weights freshness, structural density, and source type differently, so a structural edit that wins in one engine does not automatically register in another.

How should a content team measure AI visibility to avoid single-run false negatives?

Run each tracked prompt at least 10 times before treating the result as stable. SparkToro found less than a 1-in-100 chance of identical brand lists across any two runs (SparkToro, 2024), and the AirOps analysis found only 20% of brands remain visible across five consecutive runs (AirOps and Kevin Indig, 2026). Anything less than a multi-run protocol samples the variance, not the signal.
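The multi-run protocol can be expressed as a per-brand visibility rate: the share of runs in which a brand appears for one prompt. A minimal sketch, assuming each run has already been parsed into a set of brand names; that parsing step, the brand names, and the helper `visibility_rates` are hypothetical:

```python
from collections import Counter

MIN_RUNS = 10  # minimum runs per prompt suggested above


def visibility_rates(runs: list[set[str]]) -> dict[str, float]:
    """Share of runs in which each brand appears for one tracked prompt."""
    if len(runs) < MIN_RUNS:
        raise ValueError(f"need at least {MIN_RUNS} runs, got {len(runs)}")
    counts = Counter(brand for run in runs for brand in run)
    # most_common() orders brands from most to least frequently visible.
    return {brand: n / len(runs) for brand, n in counts.most_common()}


# Hypothetical: ten runs of one prompt.
runs = [{"brand-a", "brand-b"} for _ in range(6)] + [
    {"brand-a", "brand-c"} for _ in range(4)
]
print(visibility_rates(runs))
# {'brand-a': 1.0, 'brand-b': 0.6, 'brand-c': 0.4}
```

Comparing rates between two windows of equal run counts, rather than comparing two single runs, is what keeps a move from 0.6 to 0.4 distinguishable from noise.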

What makes Gemini 3 different for citation stability?

Gemini 3 delivers 31.8% more source URLs per AI Overview response than its predecessor (SE Ranking, 2026). The citation surface expanded, but the criteria for inclusion also shifted toward pages that were not previously favored, which helps explain why 42.4% of the previously cited domains did not carry over.

Does a quarterly content calendar match AI engine re-crawl cadence?

No. Pages not updated quarterly are 3x more likely to lose citations (AirOps and Kevin Indig, 2026), which means the standard enterprise content calendar is citation-losing by design. AI engines re-crawl and re-rank on shorter cycles, so execution should run on at least a weekly cadence.

How does execution-first GEO change the dashboard value proposition?

Monitoring becomes diagnostic input to the execution layer rather than a deliverable in itself. An alert on Tuesday that feeds a structural edit published Wednesday is worth the full subscription price. An alert that enters a 14-day agency queue becomes a record of what was lost, not a lever to recover it.

How fast can a brand-new domain enter the citation set on the execution-first cadence?

Fifteen days after launching with two structurally complete articles, tryres.ai held a Perplexity #1 citation on “domain authority in AI citations” and a #7 on “brands winning AI search,” against 0 Google clicks across 408 impressions in the same window (Res AI, day-15 launch citation proof, 2026). Perplexity-to-ChatGPT citation overlap is roughly 11%, so the result does not predict other engines, but it is one data point on how quickly a new domain can earn a citation in the first place.

How Res AI Closes the Re-citation Window Without an Agency Brief

Monitoring that flags displacement on the scale of the 42.4% Gemini 3 shift helps only the teams that can publish restructured content inside the same week. Res AI connects to the CMS directly and lets the content team make pinpoint structural edits through a natural-language interface: find every article that references competitor pricing, update to the latest values, restructure the comparison table, and publish, all in a single operation without a developer or an agency brief.

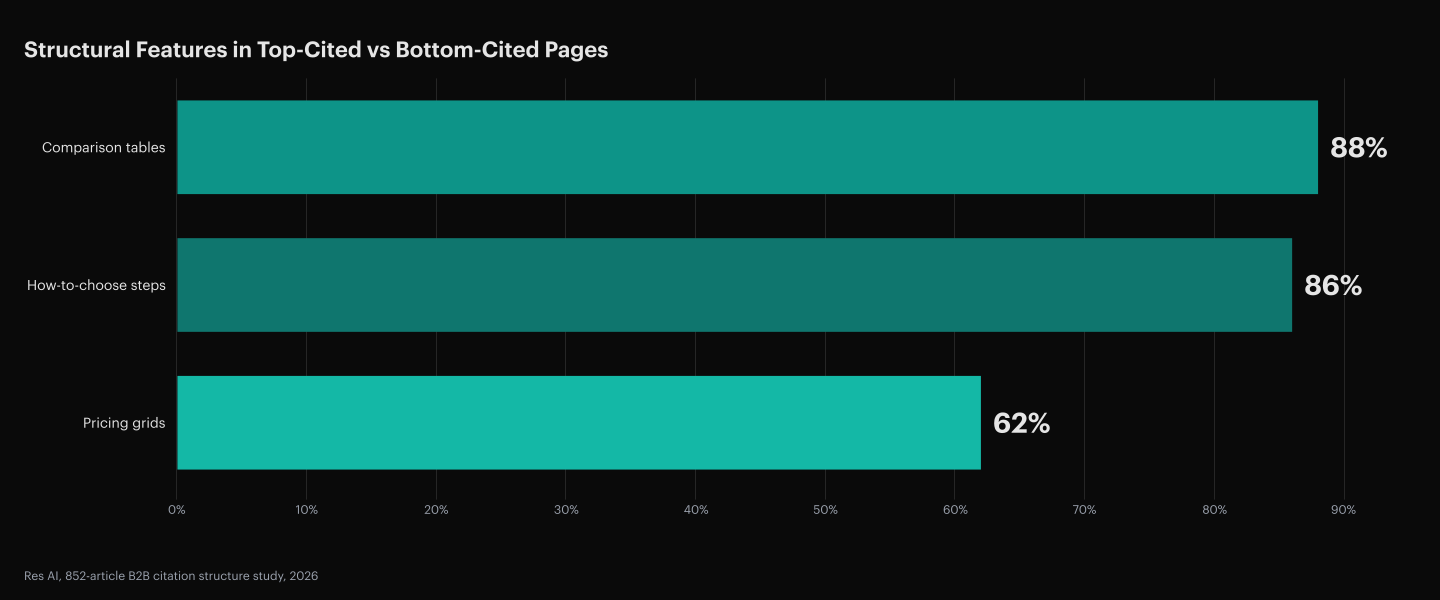

The 852-article B2B citation structure study found 88% of top-cited pages contain comparison tables, 86% include how-to-choose steps, and 62% include pricing grids, compared to 0% of bottom-cited pages (Res AI, 852-article B2B citation structure study, 2026). Res AI ships these structural changes across an existing content library at the cadence the AI engines re-crawl, which is the cadence monitoring-first platforms cannot act on even when they see the displacement first.
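A first-pass audit of an existing library for these three structures can be approximated with Python’s standard-library HTML parser. This is a crude, hypothetical heuristic, not the coding method used in the 852-article study: it counts any `<table>` as a comparison table and detects how-to-choose and pricing sections only by keywords in `<h2>`/`<h3>` headings:

```python
from html.parser import HTMLParser


class StructureAudit(HTMLParser):
    """Hypothetical heuristic: counts tables and collects h2/h3 heading text."""

    def __init__(self) -> None:
        super().__init__()
        self.tables = 0
        self.headings: list[str] = []
        self._in_heading = False

    def handle_starttag(self, tag, attrs):
        if tag == "table":
            self.tables += 1
        elif tag in {"h2", "h3"}:
            self._in_heading = True
            self.headings.append("")

    def handle_endtag(self, tag):
        if tag in {"h2", "h3"}:
            self._in_heading = False

    def handle_data(self, data):
        if self._in_heading:
            self.headings[-1] += data.lower()


def audit(html: str) -> dict[str, bool]:
    """Flag which of the three structures a page appears to contain."""
    parser = StructureAudit()
    parser.feed(html)
    return {
        "comparison_table": parser.tables > 0,  # any table counts (crude)
        "how_to_choose": any("how to choose" in h for h in parser.headings),
        "pricing": any("pricing" in h for h in parser.headings),
    }


page = "<h2>How to Choose a Vendor</h2><p>...</p><table><tr><td>A</td></tr></table>"
print(audit(page))
# {'comparison_table': True, 'how_to_choose': True, 'pricing': False}
```

A keyword heuristic like this over-counts layout tables and misses sections with unconventional headings, so it is best used to prioritize pages for human review rather than to score them.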

The Strategy Agent monitors the prompts buyers are asking AI engines and identifies which structural edits close the largest citation gaps. The Citation Agent verifies the claims in existing articles against an up-to-date source library. The Content Agent converts prose into extractable structure. The output is a published change set, not a brief handed to an external team for a 14-day turnaround.

Res AI turns the 48-hour re-citation window into a live workflow by letting content teams make pinpoint structural edits across an existing CMS through a natural-language interface, without developer involvement. Every monitoring alert feeds a published restructuring edit inside the window where the citation can still be recovered.