87% of content marketers plan to increase content marketing budgets in 2026, yet only 1 in 4 has restructured their content program for LLM audiences (Clutch/Conductor, 2026). The funding curve and the buyer attention curve are now pointing in opposite directions. Most programs are pouring more spend into the same distribution assumptions that produced the gap in the first place.

87% of Content Teams Plan Budget Increases in 2026

87% of content marketers plan to increase content marketing budgets in 2026 amid AI search disruption (Clutch/Conductor, 2026). The spending decision is real and already committed in most annual plans. The assumption about who will read the content, on which surface, is the part that has not been rewritten to match the current year.

Budget cycles are structural. A content team that won approval for a 2026 increase in August 2025 built the case on 2024 metrics, 2024 persona research, and a 2024 assumption about how organic search works. None of those inputs are a current read on the channel. Google AI Overviews went global on January 27, 2026. ChatGPT passed 2.5 billion daily prompts in the same quarter (TechCrunch, 2025). The shift is not forecast; it is priced in already.

The practical problem is that the budget increase gets spent on the same production plan that made the previous year’s content. More articles, more briefs, more agency engagements, all still targeting a human search reader who is doing a different job now. The money is moving. The playbook under the money has not.

Only 1 in 4 Teams Has Restructured Content for LLM Audiences

Only 1 in 4 content marketers is now prioritizing LLMs as their primary audience (Clutch/Conductor, 2026). The other 3 in 4 are either still writing for the human search reader or assuming SEO content will translate unchanged to AI citation. Neither assumption survives contact with how generative engines actually extract passages.

Extraction is structural. The 852-article B2B citation structure study found six structural features (bold label blocks, comparison tables, how-to-choose steps, pricing grids, product reviews, and definitions) in 80% or more of the top 50 cited pages and in 0% of the bottom 50 (Res AI, 852-article B2B citation structure study, 2026). Pages written for a human search reader rarely contain these features at density. Pages written for LLM audiences are built around them.

The 75% of teams that have not restructured are running the 2024 production pipeline against the 2026 discovery surface. The work is not bad. It lands on the wrong channel.

A two-article launch on tryres.ai shows the cost of writing for the wrong channel. Both articles shipped on April 17, 2026, built to the same six-feature structural template. On day 15, Perplexity cited one at #1 on “domain authority in AI citations” and the other at #7 on “brands winning AI search,” next to Search Engine Land, Reddit, and Forbes. Google Search Console returned 408 impressions and 0 clicks across the same window (Res AI, day-15 launch citation proof, 2026). Two articles built for the LLM audience converted to two page-one Perplexity citations on a 15-day-old domain; the Google channel returned no clicks on the same content in the same window.

94% of B2B Buyers Use AI Across Every Purchase Stage

94% of business buyers now report using AI in their buying process, up five percentage points from 89% the prior year, with twice as many naming generative AI their most meaningful information source across every stage (Forrester, 2025). The stage-by-stage shape of the finding is the part that breaks the existing content plan. AI is not a late-stage evaluation tool. It is a constant presence from awareness through shortlist.

Content programs that segmented production by funnel stage (top-of-funnel explainers, middle-of-funnel comparison posts, bottom-of-funnel product pages) assumed Google was the connective tissue across stages. That assumption is no longer load-bearing. The buyer opens ChatGPT in the awareness stage, opens it again in the comparison stage, and opens it a third time to sanity-check the shortlist. If the same brand is not cited in all three sessions, the funnel is not broken; the funnel is running on a different rail.

51% of B2B Buyers Now Start Research With an AI Chatbot

51% of B2B software buyers and decision-makers now begin their software purchasing research with an AI chatbot more often than with a traditional search engine like Google, up from 29% in April 2025 (G2, 2026). For the first time on record, the majority of B2B research sessions originate outside Google. The 22-point shift happened inside a single year.

The follow-through finding is where the budget arithmetic gets expensive. 69% of B2B software buyers reported choosing a different software vendor than they initially planned based on AI chatbot guidance, with one in three purchasing from a vendor they had never previously heard of (G2, 2026). Content absent from AI answers is not just losing impressions. It is losing shortlist placement at the precise moment the vendor decision shifts.

Organic CTR has started moving in the same direction. Position-1 organic CTR dropped by 58% on queries where an AI Overview is present, compared to equivalent queries without one (Ahrefs, 2025). The budget increase is landing on a channel whose per-click yield is falling while the alternative channel is the one the buyer has already switched to.

84% of B2B SaaS CMOs Now Discover Vendors Through AI

84% of B2B SaaS CMOs now use AI and LLMs for vendor discovery, up from 24% in 2025 (Wynter, 2026). AI-led discovery among the buyer persona most B2B content programs optimize for grew 3.5x in a single year. Content that was optimized for Google visibility is not present on the new surface unless it was explicitly restructured for it.

The pattern is consistent across buyer roles. Senior leaders report the highest AI usage, and they are the same cohort content teams most want to reach with thought-leadership articles, longform analyses, and ICP-targeted guides. The shift is not a generational edge case. It is the persona with the largest signing authority moving first.
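The year-over-year shifts quoted above mix two units: percentage points and multiples of the starting share. As a minimal sketch (the helper name `shift` is an illustration, not something from the cited surveys), the arithmetic behind both figures can be checked like this:

```python
def shift(before_pct: float, after_pct: float) -> tuple[float, float]:
    """Return (percentage-point change, multiple of the starting share)."""
    if before_pct <= 0:
        raise ValueError("before_pct must be positive")
    return after_pct - before_pct, after_pct / before_pct


# Figures quoted in this article.
for label, before, after in [
    ("CMOs using AI for vendor discovery (Wynter)", 24, 84),
    ("Buyers starting research in an AI chatbot (G2)", 29, 51),
]:
    points, multiple = shift(before, after)
    print(f"{label}: +{points:.0f} points, {multiple:.2f}x")
```

The CMO figure works out to +60 points and 3.50x; the buyer figure to +22 points and 1.76x. Reporting both units avoids reading a point change as a relative one.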

AI Referral Traffic Converts 534% Above Site Average

AI referral traffic influences conversion events at a rate 534% higher than the average across all website channels (Eyeful Media, 2026). The budget math is not complicated. AI visitors are fewer, but they convert at a far higher rate, and content teams not segmenting AI referral sources in their analytics cannot see a channel that is already outpacing the ones they report on weekly.

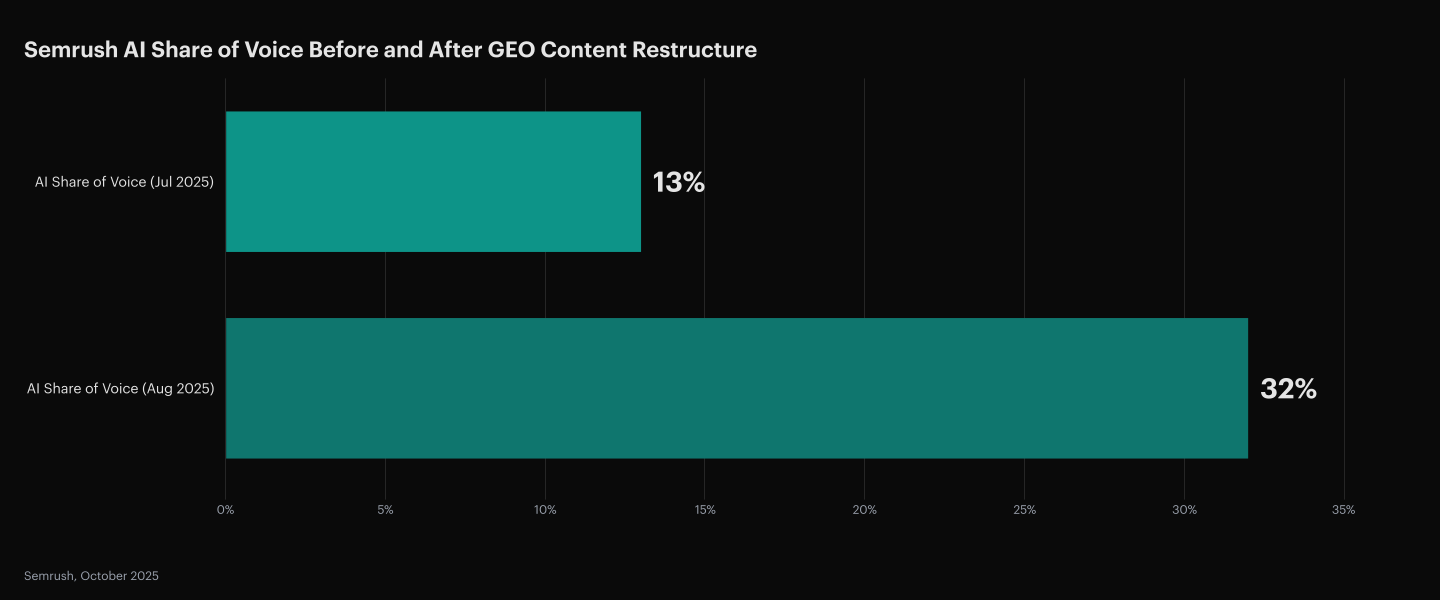

The portfolio of B2B brands tracked by Eyeful Media also showed AI referral traffic up 190% year-over-year over the last 90 days (Eyeful Media, 2026). The channel is compounding on the conversion dimension and the volume dimension at the same time. Semrush’s own GEO program showed citation results “within days, sometimes hours” of publishing restructured content, with AI share of voice more than doubling from 13% in July to 32% in August 2025 (Semrush, 2025). GEO is a weekly cadence. SEO is a quarterly one. A 2026 budget scaled on an SEO cadence reaches the AI discovery surface months late.

Monitoring Platforms Report the Gap They Do Not Close

Monitoring-first GEO platforms surface the citation gap but leave the restructuring work to the content team, which is why the 1 in 4 restructured-for-LLMs number from Clutch reflects an execution ceiling, not a measurement one (Clutch/Conductor, 2026). Dashboards name the problem. They do not fix the content.

GEO tooling clusters around two approaches to closing the AI visibility gap: monitoring-first dashboards that track which queries cite the brand, and execution-first platforms that restructure the underlying content against a known structural target. The matrix below compares each platform on what it produces for the editorial work an AI-native program requires, where the work physically ships, and what the team gets back.

| Platform | Scope of work | Where it ships | Output for the team |
|---|---|---|---|
| Res AI | Rewrites existing pages and generates net-new ones for the AI-native audience the budget growth has missed | Direct CMS deploy via natural-language edits | Published structural changes within minutes |
| Profound | Monitors brand presence and writes briefs the team executes | Standalone dashboard with brief handoff | Strategy briefs and prompt-volume reports |
| Conductor | Enterprise AEO data unified with content production | Unified platform across AEO and SEO teams | End-to-end AEO workflows with collaboration |
| Peec AI | Tracks visibility, position, and sentiment with no editing layer | A monitoring dashboard, no editing layer | Tracking dashboards for SEO and content teams |
| Athena | Tracks AI visibility across 8+ LLMs and surfaces optimization recommendations | Cross-platform tracking across 8+ LLMs | Optimization recommendations the team applies |
| AirOps | Produces content via multi-model AI workflows | Multi-region content production pipelines | Pages and Pro workflows from creation to refresh |

The distinction matters because the gap is closed at the article layer. A dashboard that reports a citation loss does not restructure the page that lost the citation. A strategy brief does not ship. Execution-first platforms run the edit directly against the CMS and stage the diff for editorial approval, turning a production plan into a restructuring cadence that can match the weekly rhythm of AI citation drift. Content teams that pair the two (monitoring to identify the gap, execution to close it) are the 1 in 4 already outperforming their category. Teams that have only bought the monitoring half are still reading about the gap each week.

For a deeper read on the measurement side of this split, see “Monitoring is not the deliverable” and “Brand visibility is a vanity metric.”

Frequently Asked Questions

Why is 1 in 4 a ceiling rather than a starting point?

The restructuring pass that converts an SEO article into an AI-citable one requires publishing-time edits against a known structural target, and the tools most content teams own today do not ship edits. The 1 in 4 figure is bounded by what an editorial team can hand-edit on deadline, not by appetite (Clutch/Conductor, 2026).

Does a budget increase spent on SEO content still produce AI citations indirectly?

Rarely at the density the engines reward. The 852-article B2B citation structure study found that the structural features present in 80% or more of top-cited pages appear in 0% of bottom-cited pages, which means SEO-optimized content without the structural pass stays on the wrong side of the citation divide (Res AI, 852-article B2B citation structure study, 2026).

What is the smallest production change a team can make before the next budget cycle?

Convert the first sentence under every H2 into a 40-to-80-word answer capsule with one specific number and a named source. 55% of AI Overview citations come from the first 30% of page content, so the single edit closest to the citation band is rewriting the opening passage of each section (CXL, 2024).
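The capsule rule above can be turned into a rough pre-publish check. The sketch below is only a heuristic: the function name `check_capsule` is hypothetical, and it assumes the named source appears as a parenthetical citation such as "(G2, 2026)", matching this article's own citation style.

```python
import re

# Assumed citation shape: "(Source name, YYYY)", as used in this article.
CITATION = re.compile(r"\(([^()]+),\s*(\d{4})\)")
NUMBER = re.compile(r"\d")


def check_capsule(text: str, min_words: int = 40, max_words: int = 80) -> dict[str, bool]:
    """Check an opening passage against the 40-to-80-word capsule rule."""
    words = text.split()
    # Strip citations first so the citation year does not count as the number.
    body = CITATION.sub("", text)
    return {
        "length_ok": min_words <= len(words) <= max_words,
        "has_number": bool(NUMBER.search(body)),
        "has_source": bool(CITATION.search(text)),
    }
```

Running it over the first paragraph under each heading flags sections whose opening is too short, too long, or missing a figure or attribution. It does not judge whether the number is specific or the source credible; that stays an editorial call.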

How fast do results compound once a team shifts cadence?

Faster than the SEO timeline. Semrush’s AI share of voice more than doubled from 13% in July to 32% in August 2025 after a restructuring pass, and citation results landed “within days, sometimes hours” of publishing (Semrush, 2025).

Why does AI referral traffic convert at such a premium?

The AI step filters the session for intent. A buyer who clicked through from an AI answer already knows which problem they are solving, and the content in the AI answer has already been surfaced because it addressed a specific question. The 534% conversion premium reflects this pre-qualification (Eyeful Media, 2026).

Is Google organic traffic still worth a budget increase in 2026?

The calculus changed, not the answer. Position-1 CTR fell 58% on AI-Overview queries (Ahrefs, 2025), but organic still accounts for most B2B site traffic today. The budget question is the mix, not a replacement, and the mix is under-indexed on the restructuring pass that doubles as both AI and featured-snippet optimization.

Which buyer personas have moved to AI fastest?

B2B SaaS CMOs moved first and most, from 24% in 2025 to 84% in 2026 (Wynter, 2026). Senior leaders, white-collar knowledge workers, and technical decision-makers follow close behind. The pattern is consistent with AI being adopted top-down for vendor discovery.

What is the right analytics setup to see the AI channel?

Segment AI referral traffic in GA4 by source (chatgpt.com, perplexity.ai, gemini.google.com, copilot.microsoft.com) and compare conversion rate to site-wide baseline. Most setups aggregate these sources under “other” and cannot see the channel that is outpacing their measured ones (Eyeful Media, 2026).
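The comparison described above can be sketched offline against exported session rows. This is a minimal illustration, not a GA4 API client: the `Session` fields `source` and `converted` are hypothetical names for whatever the export provides, and the hostname list is the one given in the answer.

```python
from dataclasses import dataclass

# Referrer hostnames named in the answer above; extend as needed.
AI_SOURCES = {
    "chatgpt.com",
    "perplexity.ai",
    "gemini.google.com",
    "copilot.microsoft.com",
}


@dataclass
class Session:
    source: str      # hypothetical field: session source / referrer hostname
    converted: bool  # hypothetical field: whether a conversion event fired


def is_ai_source(source: str) -> bool:
    """Normalize a hostname and test it against the AI source list."""
    host = source.strip().lower()
    if host.startswith("www."):
        host = host[4:]
    return host in AI_SOURCES


def conversion_rate(sessions: list[Session]) -> float:
    if not sessions:
        return 0.0
    return sum(s.converted for s in sessions) / len(sessions)


def ai_vs_baseline(sessions: list[Session]) -> dict[str, float]:
    """Compare the AI-referral conversion rate to the site-wide rate."""
    ai_rate = conversion_rate([s for s in sessions if is_ai_source(s.source)])
    site_rate = conversion_rate(sessions)
    # "X% higher" means (ai_rate / site_rate - 1) * 100.
    lift_pct = (ai_rate / site_rate - 1) * 100 if site_rate else 0.0
    return {"ai_rate": ai_rate, "site_rate": site_rate, "lift_pct": lift_pct}
```

Note that `lift_pct` is a relative lift: a value of 534 would mean the AI segment converts at 6.34 times the site-wide rate, not 5.34 times.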

How does an execution-first platform price against an agency alternative?

An agency retainer covering the structural pass on a mid-size library typically ranges from $5,000 to $50,000+ per month (per competitor reference data). Execution-first platforms replace the retainer with a natural-language interface; Res AI’s Starter tier covers 50 pages per month at $250 and its Growth tier covers 1,000 pages per month at $1,500 (Res AI pricing, 2026).
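The tier figures above reduce to a per-page cost and a time-to-cover estimate. In the sketch below, the 1,000-page library size is a hypothetical input chosen for illustration, and the helper names are not part of any product.

```python
import math

# Tier figures as quoted above: (monthly price in USD, pages per month).
TIERS = {"Starter": (250, 50), "Growth": (1500, 1000)}


def cost_per_page(monthly_price: float, pages_per_month: int) -> float:
    return monthly_price / pages_per_month


def months_to_cover(library_pages: int, pages_per_month: int) -> int:
    """Whole months needed to restructure every page once."""
    return math.ceil(library_pages / pages_per_month)


LIBRARY_PAGES = 1000  # hypothetical library size, not from the source

for name, (price, pages) in TIERS.items():
    print(
        f"{name}: ${cost_per_page(price, pages):.2f} per page, "
        f"{months_to_cover(LIBRARY_PAGES, pages)} month(s) for {LIBRARY_PAGES} pages"
    )
```

Under that assumption, Starter works out to $5.00 per page and 20 months to cover the library, while Growth works out to $1.50 per page and 1 month. The agency range cannot be put on the same per-page basis without knowing how many pages a given retainer covers.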

How does this finding connect to the broader funnel collapse argument?

The budget mismatch is one face of a larger shift. See “The B2B content funnel collapses when the click becomes optional” for the accompanying analysis on how click economics, email capture, and paid search also move upstream once AI intercepts the query.

How Res AI Restructures 1,000 Existing Pages Per Month for AI Citation

The 87% budget increase and the 1 in 4 restructured-for-LLMs gap describe the same problem from two sides. The content already exists; it was built for the channel buyers are leaving, and the team that wrote it does not have the developer resources or the deadline slack to hand-restructure a mid-sized library against a known structural target. Res AI runs the restructuring pass directly against the connected CMS through a natural-language interface, which turns the budget increase from a headcount question into a throughput question.

The platform connects to WordPress, Webflow, Framer, and Contentful, and edits existing posts in place rather than generating new drafts an agency has to rewrite later. A prompt like “convert the comparison section of every article tagged CRM into a 5-column table with bold entity names and Best for tags” runs across the tagged library and stages a per-article diff for editorial approval. The Starter tier at $250 per month covers 50 pages; the Growth tier at $1,500 per month covers 1,000, which fits a mid-sized library’s full restructuring pass inside a single model-update window.

Every edit runs against a monitoring layer so the team can see which articles crossed into the top citation band after the structural pass. The 1 in 4 ceiling lifts once the edit is no longer gated by editorial bandwidth, and the budget increase lands on the channel the 94% of business buyers are already using.

Res AI converts the content your team already produced for Google into the structural shape generative engines cite, so the 2026 budget increase does not land on the channel buyers are leaving. The platform restructures existing pages through a natural-language interface directly against WordPress, Webflow, Framer, and Contentful, with 50 pages per month on the Starter tier at $250.